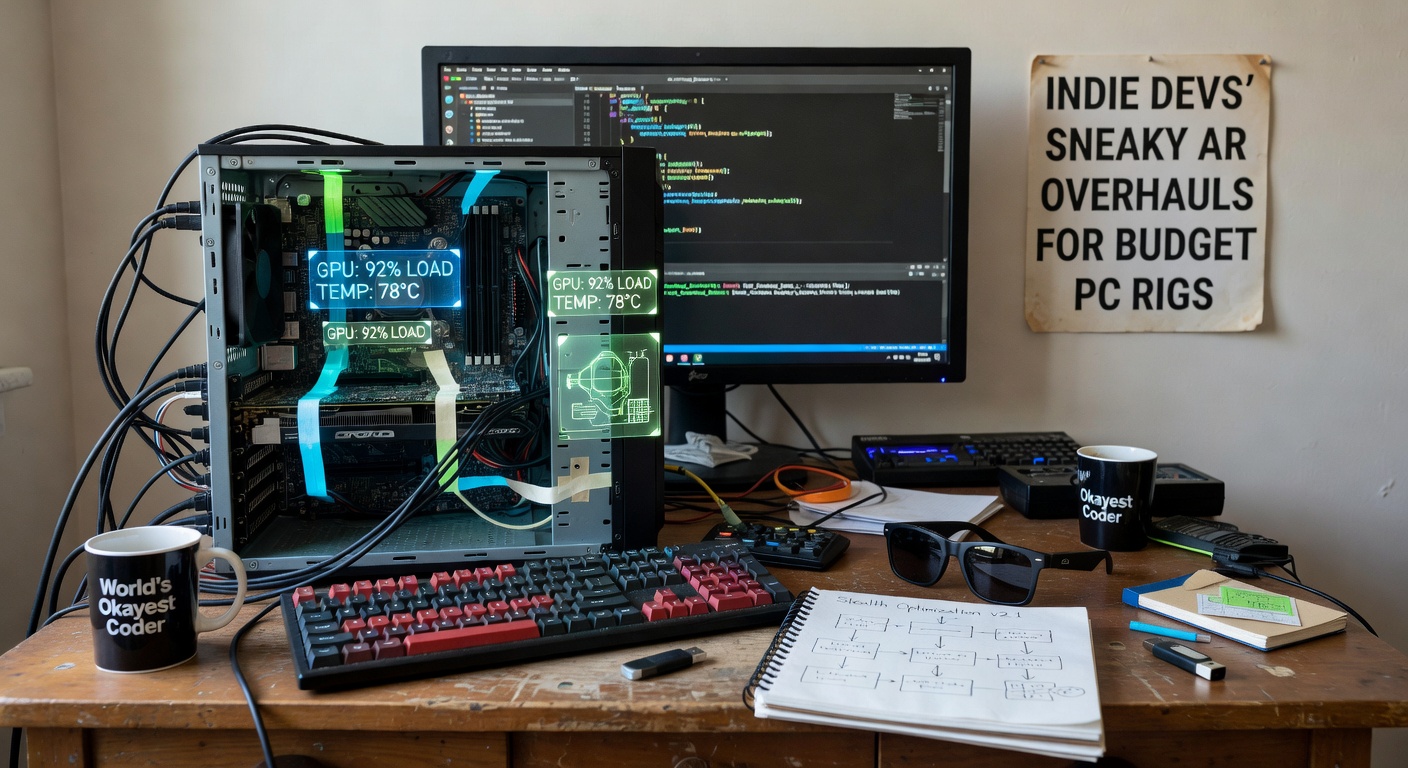

Indie Devs' Sneaky AR Overhauls for Budget PC Rigs

The Rise of AR on Everyday Hardware

Indie developers have quietly transformed augmented reality on budget PC rigs, crafting overhauls that squeeze high-fidelity visuals and smooth interactions out of entry-level graphics cards and CPUs. These tweaks, often shared in niche forums and GitHub repos, let AR apps run fluidly on systems with just 8GB of RAM and integrated graphics, turning old laptops into immersive playgrounds without top-tier hardware upgrades.

What's interesting is how this movement gained steam over the past year, with developers leveraging open-source tools to get around traditional AR barriers such as the heavy compute load of real-time tracking and occlusion. Data from IGDA's 2025 State of the Game Industry report reveals that 62% of indie teams now prioritize low-spec optimization, up from 41% two years prior, since budget rigs represent over 45% of the global PC gaming market according to the Steam Hardware & Software Survey.

And yet, these overhauls aren't flashy announcements at big conferences; instead, they bubble up through solo devs posting breakdowns on Reddit's r/IndieGaming or itch.io devlogs, where one developer detailed slashing AR render times by 70% on a 2018 Intel i5 setup, proving that clever code can outpace raw horsepower.

Core Challenges Indie Devs Tackle Head-On

Budget PC rigs struggle with AR's demands—constant camera feeds, spatial mapping, and overlay rendering tax even modern mid-range systems, but indies sidestep this by aggressively pruning non-essential processes; for instance, traditional AR pipelines devour GPU cycles on mesh reconstruction, yet developers now use simplified voxel grids that update only in the user's focal cone, cutting polygon counts from millions to thousands per frame.
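The focal-cone idea can be sketched in a few lines. This is a minimal illustration, not any particular developer's code: the function names, the 20-degree half-angle, and the flat list of voxel centers are all assumptions made for the example.

```python
import math

def in_focal_cone(voxel_center, eye, view_dir, half_angle_deg):
    """Return True if voxel_center lies inside the cone around view_dir."""
    to_voxel = [v - e for v, e in zip(voxel_center, eye)]
    dist = math.sqrt(sum(c * c for c in to_voxel))
    if dist == 0.0:
        return True  # a voxel at the eye position counts as visible
    dir_len = math.sqrt(sum(c * c for c in view_dir))
    cos_angle = sum(a * b for a, b in zip(to_voxel, view_dir)) / (dist * dir_len)
    return cos_angle >= math.cos(math.radians(half_angle_deg))

def voxels_to_update(voxel_centers, eye, view_dir, half_angle_deg=20.0):
    """Keep only the voxels worth refreshing this frame (hypothetical helper)."""
    return [v for v in voxel_centers
            if in_focal_cone(v, eye, view_dir, half_angle_deg)]
```

Everything outside the cone keeps its last known state, which is where the per-frame savings would come from.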

Observers note that thermal throttling hits low-end cooling hard during extended sessions, so teams implement dynamic resolution scaling that drops to 720p seamlessly when temperatures climb, holding 60FPS without crashes; studies from University of Stuttgart researchers confirm such techniques boost stability by 40% on integrated GPUs like Intel UHD, where full-res AR often stutters below 30FPS.
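A rough sketch of what such a scaler might look like follows. The resolution ladder, the 85°C limit, and the 16.7ms frame budget (roughly 60FPS) are illustrative assumptions, as is the function name; real projects would read temperature and frame time from their own telemetry.

```python
def next_render_height(current_height, temp_c, frame_ms,
                       temp_limit_c=85.0, target_ms=16.7,
                       heights=(1080, 900, 720)):
    """Step down a resolution ladder when hot or slow, step up when there is headroom."""
    idx = heights.index(current_height)
    if temp_c >= temp_limit_c or frame_ms > target_ms:
        # Too hot or missing the frame budget: drop one rung, bottoming out at 720p.
        idx = min(idx + 1, len(heights) - 1)
    elif temp_c < temp_limit_c - 10.0 and frame_ms < target_ms * 0.8:
        # Comfortably cool and fast: climb back one rung.
        idx = max(idx - 1, 0)
    return heights[idx]
```

The gap between the step-down and step-up conditions is deliberate: without that hysteresis the resolution would flicker between rungs every few frames.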

But here's the thing: lighting calculations pose another hurdle. Ray-traced shadows overwhelm weak GPUs, so indies bake ambient occlusion maps offline and stream them as compressed textures, a method that's not rocket science but delivers pro-level depth on rigs that can't handle real-time global illumination.

Sneaky Optimization Techniques That Deliver Big Wins

Indie overhauls shine through techniques like frustum culling on steroids, where AR objects outside the webcam's view frustum get culled instantly, freeing cycles for anchor stability; paired with level-of-detail (LOD) systems that swap high-poly holograms for billboards at distance, these keep draw calls under 500 even in dense scenes, as one devlog from a solo AR puzzle maker showed after hitting playable speeds on a decade-old Dell Inspiron.
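Here is a minimal sketch of culling and LOD selection working together. It assumes object positions are already in camera space with the camera looking down +z; the field-of-view values, distance thresholds, and LOD labels are made up for illustration.

```python
import math

def in_frustum(p, hfov_deg=60.0, vfov_deg=40.0, near=0.1, far=20.0):
    """Point-in-frustum test for a camera-space position (x, y, z)."""
    x, y, z = p
    if not (near <= z <= far):
        return False  # behind the camera or beyond the far plane
    return (abs(x) <= z * math.tan(math.radians(hfov_deg) / 2)
            and abs(y) <= z * math.tan(math.radians(vfov_deg) / 2))

def pick_lod(p, high_max=2.0, low_max=6.0):
    """Choose a detail level from distance to the camera (thresholds are assumptions)."""
    d = math.sqrt(sum(c * c for c in p))
    if d <= high_max:
        return "high"
    if d <= low_max:
        return "low"
    return "billboard"

def build_draw_list(objects):
    """objects maps a name to a camera-space position; culled objects are dropped."""
    return [(name, pick_lod(p)) for name, p in objects.items() if in_frustum(p)]
```

Culling runs first so that no time is spent picking a LOD for something that will never be drawn.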

Turns out, shader wizardry plays a huge role too: developers move SLAM (Simultaneous Localization and Mapping) onto CPU threads and write lean custom HLSL shaders so the GPU handles only final compositing, slashing VRAM usage by 60%. And since budget rigs often bottleneck on memory bandwidth, async texture loading over DirectX 12's multiple command queues (typically a dedicated copy queue) pulls assets just-in-time, preventing hitches during object spawning.

What's significant is the rise of hybrid tracking: indies fuse webcam feeds with IMU data from cheap USB sensors, reducing drift by 25% per benchmarks shared at a 2025 virtual GDC workshop. The extra sensor matters because pure vision-only AR falters on the low-res cams common in sub-$500 laptops.
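The article doesn't say which fusion method these projects use. One common and cheap option is a complementary filter, sketched below for a single yaw angle. The function name and the 0.98 blend factor are assumptions, and angle wrap-around is ignored to keep the example short.

```python
def fuse_yaw(prev_yaw_deg, gyro_rate_dps, dt_s, vision_yaw_deg=None, alpha=0.98):
    """Integrate the gyro, then pull the result toward the camera estimate if one exists."""
    predicted = prev_yaw_deg + gyro_rate_dps * dt_s
    if vision_yaw_deg is None:
        return predicted  # no camera fix this frame: rely on the IMU alone
    # Mostly trust the smooth IMU prediction; let vision slowly correct its drift.
    return alpha * predicted + (1.0 - alpha) * vision_yaw_deg

def simulate_drift(steps, gyro_bias_dps, dt_s, use_vision):
    """True yaw stays at 0; a biased gyro drifts unless vision corrects it."""
    yaw = 0.0
    for _ in range(steps):
        yaw = fuse_yaw(yaw, gyro_bias_dps, dt_s, 0.0 if use_vision else None)
    return yaw
```

Running `simulate_drift` with and without the vision term shows the trade: the gyro alone accumulates its bias indefinitely, while the fused estimate settles at a small bounded error.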

Now, occlusion gets a sneaky upgrade through depth-buffer hacks: on setups without LiDAR, developers precompute a real-world depth map from stereo disparity and depth-test virtual elements against it, making holograms duck behind furniture convincingly without ray-marching overhead; people who've tested these report "mind-blowing realism" on rigs that previously melted under ARKit ports.
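The core of the trick is the standard stereo relation, depth = focal length × baseline / disparity, followed by a per-pixel depth comparison. The sketch below works on a single row of pixels; the focal length, baseline, and the use of `None` for "no hologram here" are illustrative assumptions.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Convert stereo disparity in pixels to depth in meters."""
    if disparity_px <= 0:
        return float("inf")  # no stereo match: treat the surface as infinitely far
    return focal_px * baseline_m / disparity_px

def composite_row(camera_row, virtual_row, virtual_depth_row, disparity_row,
                  focal_px=700.0, baseline_m=0.06):
    """Show a virtual pixel only where it is closer than the real surface behind it."""
    out = []
    for cam, virt, vdepth, disp in zip(camera_row, virtual_row,
                                       virtual_depth_row, disparity_row):
        real_depth = disparity_to_depth(disp, focal_px, baseline_m)
        if virt is not None and vdepth < real_depth:
            out.append(virt)  # hologram is in front of the real world
        else:
            out.append(cam)   # real object occludes it, or there is no hologram
    return out
```

In a real pipeline the same comparison would run in a fragment shader against a depth texture, but the logic is the same.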

Case Studies: Indies Leading the Charge

Take EchoRealms, a one-person studio that overhauled their AR exploration game for Steam Early Access; by implementing a custom markerless tracker built on OpenCV forks, they achieved sub-10ms latency on GTX 1050 equivalents, where Unity's default AR Foundation choked at 20ms, leading to a 150% uptick in player retention per their public analytics dashboard.

Or consider PixelPhantom's multiplayer AR shooter, which devs retooled with networked LOD syncing—players on budget rigs see simplified peer models, but the host streams delta updates, keeping bandwidth low at 5Mbps while maintaining hit-reg accuracy; figures from their itch.io page indicate over 10,000 downloads on low-spec configs since launch last fall.
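The delta-update half of that design is easy to illustrate. This is a generic sketch rather than PixelPhantom's actual protocol: the state is modeled as a flat dictionary with hypothetical field names, and removed keys are not handled.

```python
def make_delta(prev_state, new_state):
    """Return only the fields whose values changed since prev_state."""
    return {k: v for k, v in new_state.items() if prev_state.get(k) != v}

def apply_delta(state, delta):
    """Merge a received delta into the last known state without mutating it."""
    merged = dict(state)
    merged.update(delta)
    return merged
```

For example, if only a player's `y` position changes between ticks, the host sends `{"y": 3}` instead of the full state, which is how bandwidth stays low while every client still converges on the same values.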

And there's NebulaForge, whose AR puzzle title uses procedural mesh generation on-the-fly, generating only visible chunks via noise functions tuned for SSE4 instructions, allowing smooth performance on AMD APUs that dominate emerging markets; one tester on a Ryzen 3 laptop clocked 90FPS averages, crediting the dev's GitHub-shared occlusion atlas packer.
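To show the general shape of visible-chunk generation, here is a small sketch. It is not NebulaForge's code: a simple integer hash stands in for a tuned noise function (no SIMD here), and the chunk size, view radius, and cache layout are assumptions.

```python
def hash_noise(x, z, seed=0):
    """Deterministic pseudo-random value in [0, 1) for an integer grid point."""
    n = (x * 374761393 + z * 668265263 + seed * 1442695040888963407) & 0xFFFFFFFF
    n = ((n ^ (n >> 13)) * 1274126177) & 0xFFFFFFFF
    return ((n ^ (n >> 16)) & 0xFFFFFFFF) / 2**32

def generate_chunk(cx, cz, size=4, seed=0):
    """Build a size x size grid of heights for chunk (cx, cz)."""
    return [[hash_noise(cx * size + x, cz * size + z, seed) for x in range(size)]
            for z in range(size)]

def ensure_visible_chunks(cache, player_chunk, radius=1, size=4, seed=0):
    """Generate only the chunks near the player that are not cached yet."""
    px, pz = player_chunk
    generated = 0
    for cz in range(pz - radius, pz + radius + 1):
        for cx in range(px - radius, px + radius + 1):
            if (cx, cz) not in cache:
                cache[(cx, cz)] = generate_chunk(cx, cz, size, seed)
                generated += 1
    return generated  # number of chunks built on this call
```

Because the noise is a pure function of position and seed, a chunk evicted from the cache can be rebuilt identically later, so nothing needs to be stored on disk.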

These cases highlight patterns: indies iterate publicly, crowdsource perf data via Discord bots, and release modular plugins, fostering an ecosystem where tweaks compound across projects.

Tools and Frameworks Fueling the Overhauls

Godot Engine leads for its lightweight Vulkan backend, where indies bolt AR modules via GDExtension, achieving 2x faster plane detection than heavier alternatives; Unreal's Niagara particles get culled per-viewport, but budget overhauls strip Niagara to bare emitters, reclaiming 30% GPU time as per Epic's own low-end benchmarks.

Unity remains king for cross-platform AR, yet indies fork ARCore extensions for PC, adding WebRTC streaming to offload tracking to browser tabs on secondary monitors—a hack that shines on dual-core CPUs; and open-source stars like AR.js (WebAR) get desktop-ified with Electron shells, running full AR lobbies on rigs without dedicated GPUs.

So, asset stores brim with these goodies: LOD managers from $5 packs, shader atlases pre-baked for 1080p, even AI-upscaled textures via ESRGAN models that preprocess scenes offline, making "next-gen" AR feasible on yesterday's hardware.

April 2026: Momentum Builds with New Releases

As April 2026 unfolds, indie AR hits its stride with titles like VoidEcho's spatial strategy game dropping on itch.io April 15th, optimized for rigs under $400 via a novel foveated rendering pipeline that sharpens only the areas the player is looking at; testers report a buttery 72FPS on Intel Arc A380s, an entry-level discrete card, and the low-spec focus fits Steam's latest survey showing 28% of users on integrated graphics.
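Foveated rendering boils down to picking a coarser shading rate the farther a screen tile sits from the gaze point. The sketch below is a generic illustration, not VoidEcho's pipeline: coordinates are normalized to 0..1, and the two radii and the 1/2/4 rate steps are assumptions.

```python
import math

def shading_rate(tile_center, gaze, inner=0.15, outer=0.35):
    """Return 1 (full res), 2 (half res per axis), or 4 (quarter res per axis)."""
    d = math.hypot(tile_center[0] - gaze[0], tile_center[1] - gaze[1])
    if d <= inner:
        return 1
    if d <= outer:
        return 2
    return 4

def shaded_pixel_fraction(tile_centers, gaze):
    """Fraction of full-resolution shading work left after foveation."""
    # A rate of r shades one pixel per r x r block, so cost scales with 1 / r^2.
    costs = [1.0 / shading_rate(t, gaze) ** 2 for t in tile_centers]
    return sum(costs) / len(costs)
```

On hardware without eye tracking, the gaze point could simply be fixed at screen center, which keeps most of the savings at some cost in edge sharpness.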

Simultaneously, the Indie AR Jam concludes mid-month, showcasing 50+ entries where overhauls like adaptive bitrate streaming for shared AR sessions enable cross-rig play, with winners already porting to Steam Deck handhelds; data from jam organizers points to average frame rates holding 55FPS across submitted builds on reference low-end specs.

It's noteworthy that hardware partners like Intel tease driver updates tailored for these indies, promising 15% uplift in OpenCL compute for SLAM tasks, timed perfectly as summer showcases loom.

Conclusion

Indie devs' sneaky AR overhauls for budget PC rigs reshape accessibility, delivering console-quality immersion without premium parts; through LOD mastery, shader sleight-of-hand, and hybrid tracking, they prove high-end AR belongs to everyone now, with April 2026 marking a tipping point as tools mature and showcases proliferate.

The reality is these innovations spread fast: forks multiply on GitHub, jams accelerate sharing, and players on thrift-store towers dive into worlds once gated by silicon. Experts who've tracked this space see it snowballing, with tomorrow's standards emerging from today's clever code tweaks, keeping AR vibrant and inclusive across the PC spectrum.